QwQ-32B-Preview

QwQ-32B-Preview镜像集成高性能开源大语言模型预览版

0

00元/小时

v1.0

QwQ-32B-Preview 项目介绍

镜像简介

QwQ-32B-Preview镜像集成高性能开源大语言模型预览版,基于320亿参数架构优化通用任务处理能力,支持128K长上下文推理与多语言交互,提供完整工具链及开箱即用部署方案,适用于学术研究与应用开发测试。 本镜像是阿里千问系列的高性能开源大语言模型QwQ-32B预览版,基于320亿参数构建,专为高质量的文本生成、智能问答、对话及内容创作而设计。适用于AI研究、开发者原型验证、内容创意生产及复杂语言任务探索等场景,提供免费、开箱即用的前沿大模型体验。

镜像使用方法

QwQ-32B-Preview需要至少64G显存运行,建议配置选择24G 4卡

vllm运行需要修改防火墙,请记得修改防火墙,添加8000端口(vllm默认使用端口为8000)

镜像已安装vllm,您可以使用vllm运行。

vllm serve /model/ModelScope/Qwen/QwQ-32B-Preview/snapshots/1032e81cb936c486aae1d33da75b2fbcd5deed4a/ -tp 4 --api-key test1234

调用的python代码

from openai import OpenAI

client = OpenAI(

api_key=test1234,

base_url=http://{内网/外网 ip}:{端口}/v1

)

model_name = client.models.list().data[0].id

print(use model name: , model_name)

response = client.chat.completions.create(

model=model_name, # 填写需要调用的模型名称

messages=[{role: user, content: 写一篇200字的作文}],

temperature = 1,

)

print(response.choices[0].message.content)

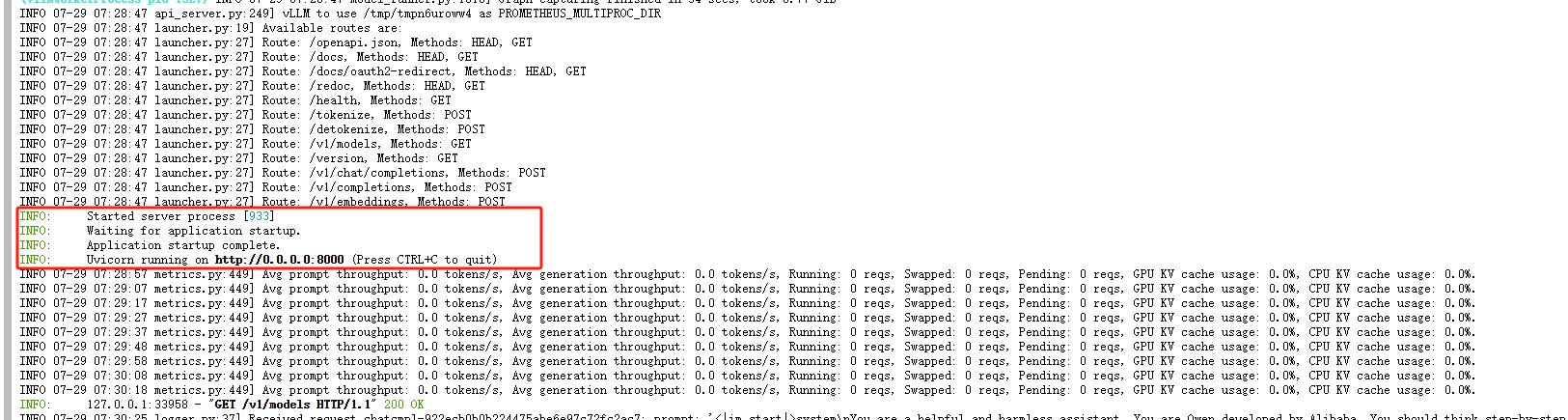

初次运行需要等3分半左右,直到出现,才是加载完成

简介

QwQ-32B-Preview 是由 Qwen 团队开发的一款实验性研究模型,旨在推动 AI 推理能力的进步。作为预览版发布,它展示了有前景的分析能力,但也存在几个重要的局限性:

- 语言混合和代码切换:模型可能会意外地混合语言或在语言之间切换,影响回应的清晰度。

- 递归推理循环:模型可能会进入循环推理模式,导致长时间的回应却没有得出结论。

- 安全性和伦理问题:模型需要增强安全措施,以确保可靠和安全的性能,用户在部署时应谨慎。

- 性能和基准测试的局限性:该模型在数学和编程领域表现优秀,但在其他领域(如常识推理和细致语言理解)上仍有提升空间。

规格:

- 类型:因果语言模型

- 训练阶段:预训练与后训练

- 架构:基于 Transformer,包含 RoPE、SwiGLU、RMSNorm 和 Attention QKV 偏置

- 参数数量:32.5B

- 非嵌入参数数量:31.0B

- 层数:64

- Attention 头数(GQA):Q 40 个,KV 8 个

- 上下文长度:完整的 32,768 个 token

欲了解更多详情,请参考我们的 博客。您还可以查看 Qwen2.5 的 GitHub 和 文档。

系统要求

Qwen2.5 的代码已经集成在最新的 Hugging Face transformers 库中,建议您使用 transformers 的最新版本。

如果您使用的是 transformers<4.37.0,则会遇到以下错误:

KeyError: qwen2

快速开始

以下提供了一个使用 apply_chat_template 的代码示例,展示了如何加载分词器和模型,并生成内容。

from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = Qwen/QwQ-32B-Preview

model = AutoModelForCausalLM.from_pretrained(

model_name,

torch_dtype=auto,

device_map=auto

)

tokenizer = AutoTokenizer.from_pretrained(model_name)

prompt = How many r in strawberry.

messages = [

{role: system, content: You are a helpful and harmless assistant. You are Qwen developed by Alibaba. You should think step-by-step.},

{role: user, content: prompt}

]

text = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True

)

model_inputs = tokenizer([text], return_tensors=pt).to(model.device)

generated_ids = model.generate(

**model_inputs,

max_new_tokens=512

)

generated_ids = [

output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)

]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]

引用

如果您觉得我们的工作对您有帮助,欢迎引用我们的研究。

@misc{qwq-32b-preview,

title = {QwQ: Reflect Deeply on the Boundaries of the Unknown},

url = {https://qwenlm.github.io/blog/qwq-32b-preview/},

author = {Qwen Team},

month = {November},

year = {2024}

}

@article{qwen2,

title={Qwen2 Technical Report},

author={An Yang and Baosong Yang and Binyuan Hui and Bo Zheng and Bowen Yu and Chang Zhou and Chengpeng Li and Chengyuan Li and Dayiheng Liu and Fei Huang and Guanting Dong and Haoran Wei and Huan Lin and Jialong Tang and Jialin Wang and Jian Yang and Jianhong Tu and Jianwei Zhang and Jianxin Ma and Jin Xu and Jingren Zhou and Jinze Bai and Jinzheng He and Junyang Lin and Kai Dang and Keming Lu and Keqin Chen and Kexin Yang and Mei Li and Mingfeng Xue and Na Ni and Pei Zhang and Peng Wang and Ru Peng and Rui Men and Ruize Gao and Runji Lin and Shijie Wang and Shuai Bai and Sinan Tan and Tianhang Zhu and Tianhao Li and Tianyu Liu and Wenbin Ge and Xiaodong Deng and Xiaohuan Zhou and Xingzhang Ren and Xinyu Zhang and Xipin Wei and Xuancheng Ren and Yang Fan and Yang Yao and Yichang Zhang and Yu Wan and Yunfei Chu and Yuqiong Liu and Zeyu Cui and Zhenru Zhang and Zhihao Fan},

journal={arXiv preprint arXiv:2407.10671},

year={2024}

}

@苍耳阿猫 认证作者

认证作者

认证作者

认证作者

镜像信息

已使用9 次

运行时长

0 H

镜像大小

30GB

最后更新时间

2026-02-04

支持卡型

RTX40系20803080Ti309048G RTX40系2080TiH20A800P40A100RTX50系V100SV100S

+13

框架版本

CUDA版本

12.4

应用

JupyterLab: 8888

自定义开放端口

8000

+1

版本

v1.0

2026-02-04